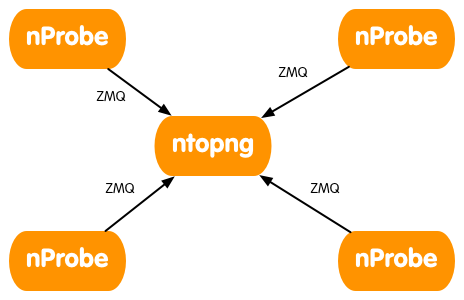

As you may know, ntopng can act as a collector for nProbe instances over ZMQ. You can either merge all probes into a single ntopng interface (i.e. all the traffic is merged and mixed) or have one interface per probe.

- Start the remote nProbe instances as follows:

[host1] nprobe --zmq "tcp://*:5556" -i ethX

[host2] nprobe --zmq "tcp://*:5556" -i ethX

[host3] nprobe --zmq "tcp://*:5556" -i ethX

[host4] nprobe --zmq "tcp://*:5556" -i ethX

- If you want to merge all nProbe traffic into a single ntopng interface, run:

ntopng -i tcp://host1:5556,tcp://host2:5556,tcp://host3:5556,tcp://host4:5556

- If you want to keep each nProbe's traffic in a separate ntopng interface, run:

ntopng -i tcp://host1:5556 -i tcp://host2:5556 -i tcp://host3:5556 -i tcp://host4:5556

Always remember that it is ntopng that connects to the nProbe instances and polls flows, and not the other way round as happens with NetFlow, for instance.
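The two collector invocations above differ only in how the endpoints are joined, so they can be generated from a host list. The following is a minimal sketch, not an official tool: the function name `build_collector_args` is hypothetical, and the script only prints the command lines rather than starting ntopng.

```shell
#!/bin/sh
# Build the "-i" arguments for an ntopng collector from a list of nProbe hosts.
#   $1      mode: "merged" (one interface) or "separate" (one interface per probe)
#   $2      ZMQ port the probes listen on
#   $3...   probe host names (placeholders such as host1, host2, ...)
build_collector_args() {
    mode=$1
    port=$2
    shift 2
    case $mode in
        merged)   sep="," ;;      # endpoints joined into a single -i
        separate) sep=" -i " ;;   # a new -i for every endpoint
        *) echo "unknown mode: $mode" >&2; return 1 ;;
    esac
    args=""
    for host in "$@"; do
        endpoint="tcp://${host}:${port}"
        if [ -z "$args" ]; then
            args="-i ${endpoint}"
        else
            args="${args}${sep}${endpoint}"
        fi
    done
    printf '%s\n' "$args"
}

# Print (rather than run) both collector command lines.
echo "ntopng $(build_collector_args merged 5556 host1 host2 host3 host4)"
echo "ntopng $(build_collector_args separate 5556 host1 host2 host3 host4)"
```

Since ntopng is the side that opens the connection, each probe's port 5556 must be reachable from the collector host.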

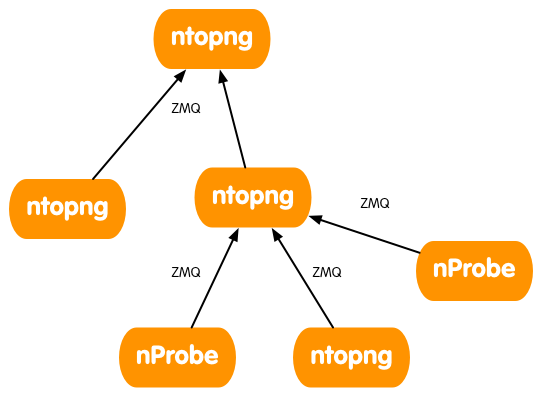

Now suppose that you want to install an ntopng instance at each of your remote locations, and have a central ntopng instance that merges the traffic coming from the various locations, as depicted in the figure above.

To do this, use the -I parameter to tell a specific ntopng instance to create a ZMQ endpoint from which another ntopng instance can poll traffic flows. Note that you can build this hierarchy by mixing nProbe and ntopng instances, or by using just ntopng instances (if you do not need to handle NetFlow/sFlow flows).

You can create a hierarchy of ntopng instances (e.g. a star topology, with many ntopng processes at the edge of the network and a central collector) as follows:

- Remote ntopng instances

  - Host 1.2.3.4

ntopng -i ethX -I "tcp://*:3456"

  - Host 1.2.3.5

ntopng -i ethX -I "tcp://*:3457"

  - Host 1.2.3.6

ntopng -i ethX -I "tcp://*:3458"

- Central ntopng

ntopng -i tcp://1.2.3.4:3456 -i tcp://1.2.3.5:3457 -i tcp://1.2.3.6:3458

Note that:

- On the central ntopng you can also add “-i ethX” if you want the central ntopng to monitor a local interface as well.

- A single ntopng instance started with -I can serve multiple clients (this also applies to nProbe). In essence, you can create a fully meshed topology and not just a tree topology.

- Data encryption can be enabled, as explained in the Data Encryption section of the User’s Guide, to create secure communication channels between nProbe and ntopng, or between ntopng and other ntopng instances.
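The first note above (a central ntopng that also monitors a local interface) can be sketched as a small helper that assembles the central command line. This is an illustration only: `central_cmd` is a hypothetical name, and the addresses and ports are the ones from the example above.

```shell
#!/bin/sh
# Build the central ntopng command line for a star topology.
#   $1      optional local interface to monitor as well ("" for none)
#   $2...   host:port pairs of the remote ntopng ZMQ endpoints created with -I
central_cmd() {
    local_if=$1
    shift
    cmd="ntopng"
    for remote in "$@"; do
        cmd="${cmd} -i tcp://${remote}"
    done
    # Append the local interface only when one was requested.
    if [ -n "$local_if" ]; then
        cmd="${cmd} -i ${local_if}"
    fi
    printf '%s\n' "$cmd"
}

# Print the command without and with a local interface.
central_cmd "" 1.2.3.4:3456 1.2.3.5:3457 1.2.3.6:3458
central_cmd ethX 1.2.3.4:3456 1.2.3.5:3457 1.2.3.6:3458
```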

You may ask yourself why all this is necessary. Let’s list some use cases:

- You have a central office with many satellite offices. You can deploy an ntopng instance per satellite office and have a single view of what is happening in all branches.

- You have a more layered network, where each regional office has other remote sub-offices under it.
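For the layered use case, a regional instance would sit in the middle: it polls its sub-offices with -i and exposes its own endpoint with -I for the central office. The sketch below only prints such a command line. It assumes that one ntopng instance can combine ZMQ collection and -I export in this way, which the layered scenario implies but which you should verify on your version. The name `regional_cmd`, the 10.0.1.x addresses, and port 4000 are hypothetical.

```shell
#!/bin/sh
# Command line for a regional (middle-tier) ntopng.
#   $1      port on which the regional instance exposes its -I endpoint
#   $2...   host:port pairs of the sub-office ntopng endpoints it polls
regional_cmd() {
    export_port=$1
    shift
    cmd="ntopng"
    for sub in "$@"; do
        cmd="${cmd} -i tcp://${sub}"
    done
    printf '%s -I "tcp://*:%s"\n' "$cmd" "$export_port"
}

# Hypothetical regional office polling two sub-offices.
regional_cmd 4000 10.0.1.2:3456 10.0.1.3:3456
```

The central ntopng would then poll only the regional endpoints, exactly as in the star example.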

Please note that the deeper your hierarchy, the more traffic the central ntopng will (likely) receive from remote peers. This topology is therefore necessary only if you want to see in realtime what is happening in your network by merging traffic from various locations. If that is not what you need, and you just want to supervise the traffic of each remote office from a central location, you can avoid sending all your traffic to the central ntopng and instead access each remote ntopng instance via HTTP, without having to propagate traffic through a sophisticated hierarchy of instances.

We hope you can use this new ntopng feature to help you solve sophisticated monitoring tasks. We are aware that with these topologies, in particular when used over the Internet, ZMQ might expose your information to users who are not supposed to see it. We are working on adding authentication to ZMQ so that you can restrict which users are able to connect to ntopng/nProbe via ZMQ. Stay tuned.