PF_RING ZC is ntop’s zero-copy technology for high-speed packet capture and processing. Until now, ZC supported ASIC-based 10/40/100 Gbit adapters from Intel, in addition to the FPGA-based 100 Gbit adapters already supported by PF_RING, including Accolade, Napatech and Silicom.

This post is to announce a new ZC driver, known as mlx, supporting a new family of 100 Gbit ASIC-based adapters, this time from Mellanox/NVIDIA, including ConnectX-5 and ConnectX-6 adapters.

The supported ConnectX adapters from Mellanox, in combination with the new mlx driver, have proven capable of both high performance, letting our applications scale up to 100 Gbps with worst-case traffic, and flexibility, with support for hardware packet filtering, traffic duplication and load-balancing, as we will see later in this post. All this comes in addition to useful features such as nanosecond hardware timestamping.

Before diving into the details of Mellanox support, we want to list the main differences between this ZC driver and those for all other adapters. Mellanox NICs can be logically partitioned into multiple independent virtual ports, typically one per application. This means for instance that:

- You can start nProbe Cento and n2disk on top of the same Mellanox adapter port: nProbe Cento can tell the adapter to implement 8-queue RSS for its virtual adapter, while n2disk can use its virtual adapter with a single queue to avoid shuffling packets.

- Traffic duplication: you can use the adapter to natively implement in-hardware packet duplication (in the above example both nProbe Cento and n2disk receive the same packets that have been duplicated in hardware). This is possible as each virtual adapter (created when an application opens a Mellanox NIC port) receives a (zero) copy of each incoming packet.

- Packet Filtering: as every application opens a virtual adapter, each application can specify independent in-hardware filtering rules (up to 32k per virtual adapter). This means for instance that cento could instruct the adapter to receive all traffic, while n2disk could use a filtering rule to discard in hardware all traffic on TCP/80 and UDP/53, because it is not relevant for the application.

Everything described above happens in hardware, and you can start hundreds of applications on top of the same adapter port, each processing a portion or all of the traffic, based on the specified filtering rules. Please note that what is described here is per port, meaning that the same application can open different virtual adapter ports with different configurations. Later in this post you will read more about this feature of the ZC driver for Mellanox.

After this overview, it is now time to dig into the details and learn how to use ZC on top of Mellanox NICs.

Configuration

In addition to the standard pfring package installation (which is available by configuring one of our repositories at packages.ntop.org), the mlx driver requires the Mellanox OFED/EN SDK to be downloaded and installed from the Download section on the Mellanox website.

cd MLNX_OFED_LINUX-5.4-1.0.3.0-ubuntu20.04-x86_64
./mlnxofedinstall --upstream-libs --dpdk

ConnectX-5 and ConnectX-6 adapters are supported by the driver, however a minimum firmware version is recommended for each adapter model. Please check the documentation for an updated list of supported adapters and firmware versions. This is the main difference with respect to other drivers: there is no dkms package to install (e.g. ixgbe-zc-dkms_5.5.3.7044_all.deb) as there is with Intel. Once you have installed the Mellanox SDK as described above, PF_RING ZC is able to operate without any additional ZC driver for Mellanox.

After installing the SDK, it is possible to use the pf_ringcfg tool, part of the pfring package, to list the installed devices and check their compatibility.

apt install pfring
pf_ringcfg --list-interfaces
Name: eno1      Driver: e1000e     RSS: 1  [Supported by ZC]
Name: eno2      Driver: igb        RSS: 4  [Supported by ZC]
Name: enp1s0f0  Driver: mlx5_core  RSS: 8  [Supported by ZC]
Name: enp1s0f1  Driver: mlx5_core  RSS: 8  [Supported by ZC]

The same tool can be used to configure the adapter: this tool loads the required modules, configures the desired number of RSS queues, and restarts the pf_ring service.

pf_ringcfg --configure-driver mlx --rss-queues 1

The new mlx interfaces should now be available to the applications. The pfcount tool can be used to list them.

pfcount -L -v 1
Name        SystemName  Module   MAC                BusID         NumaNode  Status  License  Expiration
eno1        eno1        pf_ring  B8:CE:F6:8E:DD:5A  0000:01:00.0  -1        Up      Valid    1662797500
eno2        eno2        pf_ring  B8:CE:F6:8E:DD:5B  0000:01:00.1  -1        Up      Valid    1662797500
mlx:mlx5_0  enp1s0f0    mlx      B8:CE:F6:8E:DD:5A  0000:00:00.0  -1        Up      Valid    1662797500
mlx:mlx5_1  enp1s0f1    mlx      B8:CE:F6:8E:DD:5B  0000:00:00.0  -1        Up      Valid    1662797500
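If you want to pick the mlx interfaces from a script, the listing can be split into whitespace-separated columns. The following is a minimal Python sketch, assuming the column layout of the listing above (Name, SystemName, Module, and so on) and that no field contains spaces. The helper names parse_pfcount_list and mlx_interfaces are hypothetical and not part of PF_RING.

```python
# Sample listing copied from the pfcount output in this post.
SAMPLE = """\
Name SystemName Module MAC BusID NumaNode Status License Expiration
eno1 eno1 pf_ring B8:CE:F6:8E:DD:5A 0000:01:00.0 -1 Up Valid 1662797500
eno2 eno2 pf_ring B8:CE:F6:8E:DD:5B 0000:01:00.1 -1 Up Valid 1662797500
mlx:mlx5_0 enp1s0f0 mlx B8:CE:F6:8E:DD:5A 0000:00:00.0 -1 Up Valid 1662797500
mlx:mlx5_1 enp1s0f1 mlx B8:CE:F6:8E:DD:5B 0000:00:00.0 -1 Up Valid 1662797500
"""


def parse_pfcount_list(text):
    """Turn the listing into one dict per interface, keyed by column name."""
    lines = [line for line in text.splitlines() if line.strip()]
    header = lines[0].split()
    return [dict(zip(header, line.split())) for line in lines[1:]]


def mlx_interfaces(rows):
    """Return the names to pass to -i for interfaces handled by the mlx module."""
    return [row["Name"] for row in rows if row["Module"] == "mlx"]


rows = parse_pfcount_list(SAMPLE)
print(mlx_interfaces(rows))
```

In a real script the text would come from running the tool (for example through subprocess) rather than from an embedded sample.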

pfcount can also be used to run a capture test, using the same interface name reported by the list.

pfcount -i mlx:mlx5_0

If multiple receive queues (RSS) are configured, the pfcount_multichannel tool should be used to capture traffic from all queues (it uses multiple threads).

pfcount_multichannel -i mlx:mlx5_0

Performance

In the tests we ran in our lab using a Mellanox ConnectX-5 on a 16-core Intel Xeon Gold @ 2.2/3.5 GHz, this adapter proved capable of capturing more than 32 Mpps (20 Gbps with worst-case 60-byte packets, 40 Gbps with an average packet size of 128 bytes) on a single core, and of scaling up to 100 Gbps using 16 cores by enabling RSS support.
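To relate packet rates to link rates, it helps to account for the per-frame Ethernet overhead on the wire. The sketch below is a back-of-the-envelope calculation in Python, not a measurement. It assumes that the packet sizes quoted here exclude the 4-byte FCS, and it adds the standard 8 bytes of preamble/SFD and 12 bytes of inter-frame gap. The function names are illustrative.

```python
# Standard Ethernet per-frame overhead, in bytes.
PREAMBLE_SFD = 8       # 7-byte preamble + 1-byte start frame delimiter
INTER_FRAME_GAP = 12   # minimum idle time between frames
FCS = 4                # frame check sequence (assumed NOT included in captured length)


def wire_bits(captured_len):
    """Bits occupied on the wire by one packet of the given captured length."""
    return (captured_len + FCS + PREAMBLE_SFD + INTER_FRAME_GAP) * 8


def gbps(mpps, captured_len):
    """Link bandwidth (Gbps) consumed by `mpps` million packets per second."""
    return mpps * 1e6 * wire_bits(captured_len) / 1e9


def line_rate_mpps(link_gbps, captured_len):
    """Packet rate (Mpps) needed to saturate a link with packets of this size."""
    return link_gbps * 1e9 / wire_bits(captured_len) / 1e6


print(round(gbps(32, 60), 1))             # 32 Mpps of 60-byte packets
print(round(gbps(32, 128), 1))            # 32 Mpps of 128-byte packets
print(round(line_rate_mpps(100, 60), 1))  # packets/s at 100 Gbps, 60-byte packets
```

Under these assumptions, 32 Mpps corresponds to about 21.5 Gbps with 60-byte packets and about 38.9 Gbps with 128-byte packets, in line with the rounded figures above. A 100 Gbps link carrying only 60-byte packets delivers about 148.8 Mpps, or roughly 9.3 Mpps per queue when spread evenly over 16 cores.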

What is really interesting is the application performance: some initial tests with nProbe Cento, the 100 Gbit NetFlow probe that is part of the ntop suite, showed that it is possible to process 100 Gbps of worst-case traffic (small packet size) using 16 cores, and 40 Gbps using just 4 cores. Please note that these figures depend heavily on the traffic type (fewer cores are required for a bigger average packet size, for instance) and can change according to the input and the application configuration.

Packet transmission also proved quite fast in our tests, delivering more than 16 Mpps per core and scaling linearly with the number of cores when using multiple queues (e.g. 64 Mpps with 4 cores).

Flexibility

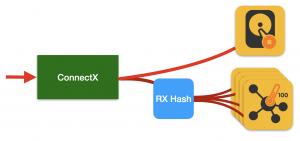

An interesting feature of this adapter is the flexibility it provides when it comes to traffic duplication and load-balancing. As opposed to ZC drivers for Intel and most FPGA adapters (Silicom Fiberblaze is probably the only exception), access to the device is non-exclusive, and it is possible to capture (duplicate) the traffic from multiple applications. In addition to this, it is also possible to apply a different load-balancing (RSS) configuration for each application. As an example, this allows us to run nProbe Cento and n2disk on the same traffic, where nProbe Cento is configured to load-balance the traffic to N streams/cores, while n2disk receives all the traffic in a single data stream.

In order to test this configuration, RSS should be enabled when configuring the adapter with pf_ringcfg, by configuring the number of queues that should be used by cento to load-balance the traffic to multiple threads.

pf_ringcfg --configure-driver mlx --rss-queues 8

Run cento by specifying the queues and the core affinity.

cento -i mlx:mlx5_0@[0-7] --processing-cores 0,1,2,3,4,5,6,7

Run n2disk on the same interface. Please note that n2disk will configure the socket to use a single queue, as a single data stream is required for dumping PCAP traffic to disk. Please also note that packet timestamps are provided by the adapter and can be used to dump PCAP files with nanosecond timestamps.

n2disk -i mlx:mlx5_0 --disable-rss -o /storage -p 1024 -b 8192 --nanoseconds --disk-limit 50% -c 8 -w 9
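The combination described in this section (every application gets its own copy of the traffic, each with its own RSS layout) can be pictured with a small toy model in Python. This is only a conceptual sketch: the classes are hypothetical, nothing here calls PF_RING, and zlib.crc32 over the 5-tuple stands in for the adapter's real RSS hash, which is computed in hardware.

```python
import zlib
from collections import namedtuple

Packet = namedtuple("Packet", "src_ip dst_ip src_port dst_port proto")


def queue_for(pkt, num_queues):
    """Pick a queue from a hash of the 5-tuple (stand-in for the hardware RSS hash)."""
    key = "|".join(str(field) for field in pkt).encode()
    return zlib.crc32(key) % num_queues


class VirtualAdapter:
    """One application's view of the port, with its own number of RSS queues."""

    def __init__(self, name, num_queues):
        self.name = name
        self.queues = [[] for _ in range(num_queues)]

    def deliver(self, pkt):
        self.queues[queue_for(pkt, len(self.queues))].append(pkt)


def port_receive(pkt, adapters):
    """Duplicate each incoming packet to every virtual adapter opened on the port."""
    for adapter in adapters:
        adapter.deliver(pkt)


cento = VirtualAdapter("cento", num_queues=8)    # load-balanced to 8 streams
n2disk = VirtualAdapter("n2disk", num_queues=1)  # single data stream

packets = [
    Packet("10.0.0.%d" % (i % 50), "192.168.1.1", 1024 + i % 50, 443, 6)
    for i in range(1000)
]
for pkt in packets:
    port_receive(pkt, [cento, n2disk])

print([len(q) for q in cento.queues])  # per-queue packet counts for cento
print(len(n2disk.queues[0]))           # n2disk sees every packet in one queue
```

Both applications see all 1000 packets. In the 8-queue view, packets of the same flow always land in the same queue, which is what lets each cento thread keep per-flow state without sharing it.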

Hardware Filtering

Last but not least, we want to mention the hardware filtering capability. The number of filters is quite high for an ASIC adapter (64 thousand rules on ConnectX-5), and the rules are flexible. In fact it is possible to:

- Assign a unique ID that can be used to add and remove specific rules at runtime.

- Compose rules by specifying which packet header field (protocol, src/dst IP, src/dst port, etc) should be used to match the rule.

- Define drop or pass rules.

- Assign a priority to the rule.

What is interesting here, besides the flexibility of the rules themselves, is the combination of traffic duplication and rule priority, which is applied across sockets. For example, if two applications capture traffic from the same interface and each sets a pass rule matching the same traffic with the same priority, both receive that traffic. If the priorities differ, only the application whose rule has the higher priority receives it.
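The priority behaviour across sockets can be illustrated with a toy model in Python. It covers only the case described here, pass rules from different sockets matching the same packet, and leaves out drop rules and unmatched traffic. The PassRule class and the receivers function are hypothetical and do not reflect the PF_RING filtering API. The model also assumes that a larger number means a higher priority, which may not match the real numbering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassRule:
    socket: str     # application/socket that installed the rule
    priority: int   # ASSUMPTION: larger value means higher priority
    proto: str
    dst_port: int

    def matches(self, proto, dst_port):
        return self.proto == proto and self.dst_port == dst_port


def receivers(rules, proto, dst_port):
    """Sockets that receive a packet: those holding a top-priority matching rule."""
    matching = [r for r in rules if r.matches(proto, dst_port)]
    if not matching:
        return set()
    top = max(r.priority for r in matching)
    return {r.socket for r in matching if r.priority == top}


same_priority = [
    PassRule("app_a", 1, "tcp", 443),
    PassRule("app_b", 1, "tcp", 443),
]
different_priority = [
    PassRule("app_a", 1, "tcp", 443),
    PassRule("app_b", 2, "tcp", 443),
]

print(sorted(receivers(same_priority, "tcp", 443)))       # ['app_a', 'app_b']
print(sorted(receivers(different_priority, "tcp", 443)))  # ['app_b']
```

With equal priorities the packet is duplicated to both sockets. With different priorities only the socket holding the higher-priority rule gets it.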

Please refer to the documentation for learning more about the filtering API and sample code.

License

The ZC driver for Mellanox requires a per-port license, similar to what happens with Intel adapters. The price of the Mellanox driver is the same as that of the Intel ZC driver, even though it is much richer in features than what you can do with Intel. You can purchase driver licenses online from the ntop shop or from an authorised reseller.

Final Remarks

In summary, a sub-$1000 Mellanox NIC can achieve the same performance as FPGA-based adapters at a fraction of the cost, and provide many more features and freedom thanks to the concept of virtual adapter.

Enjoy ZC for Mellanox!